Human-Robot Interaction Research

The project focused on Human-Robot Interaction (HRI), specifically on how embodied social robots can simulate and respond to human emotions and behaviors through facial expressions and voice interaction.

Duration: 18 months (Research Lab)

Role: User Researcher & Lab Coordinator

Team: 7 researchers (supervised 2 junior assistants)

Tools: Python, Arduino, SPSS, Video Analysis, Usability Testing

Skills: Behavioral Research, Data Analysis, Cross-functional Collaboration

Challenge

The challenge was to understand how embodied social robots can communicate effectively with humans through emotional expression and behavior. The research aimed to optimize human-machine interaction by studying how facial expressions, gaze patterns, and voice interaction influence user trust, empathy, and cognitive processing in controlled environments.

Research Questions:

How do realistic emotional expressions in robots affect user trust and engagement?

What role does synchronized gaze behavior play in human-robot communication?

How can anthropomorphic design principles improve assistive technology outcomes?

50+ user testing sessions conducted

40% improvement in human-robot trust

IRB-compliant protocols developed

My Approach

1. Research Design & Protocol Development

Applied human factors psychology principles to design controlled experiments measuring emotional perception and behavioral response. Created testing protocols that met IRB compliance and participant safety standards.

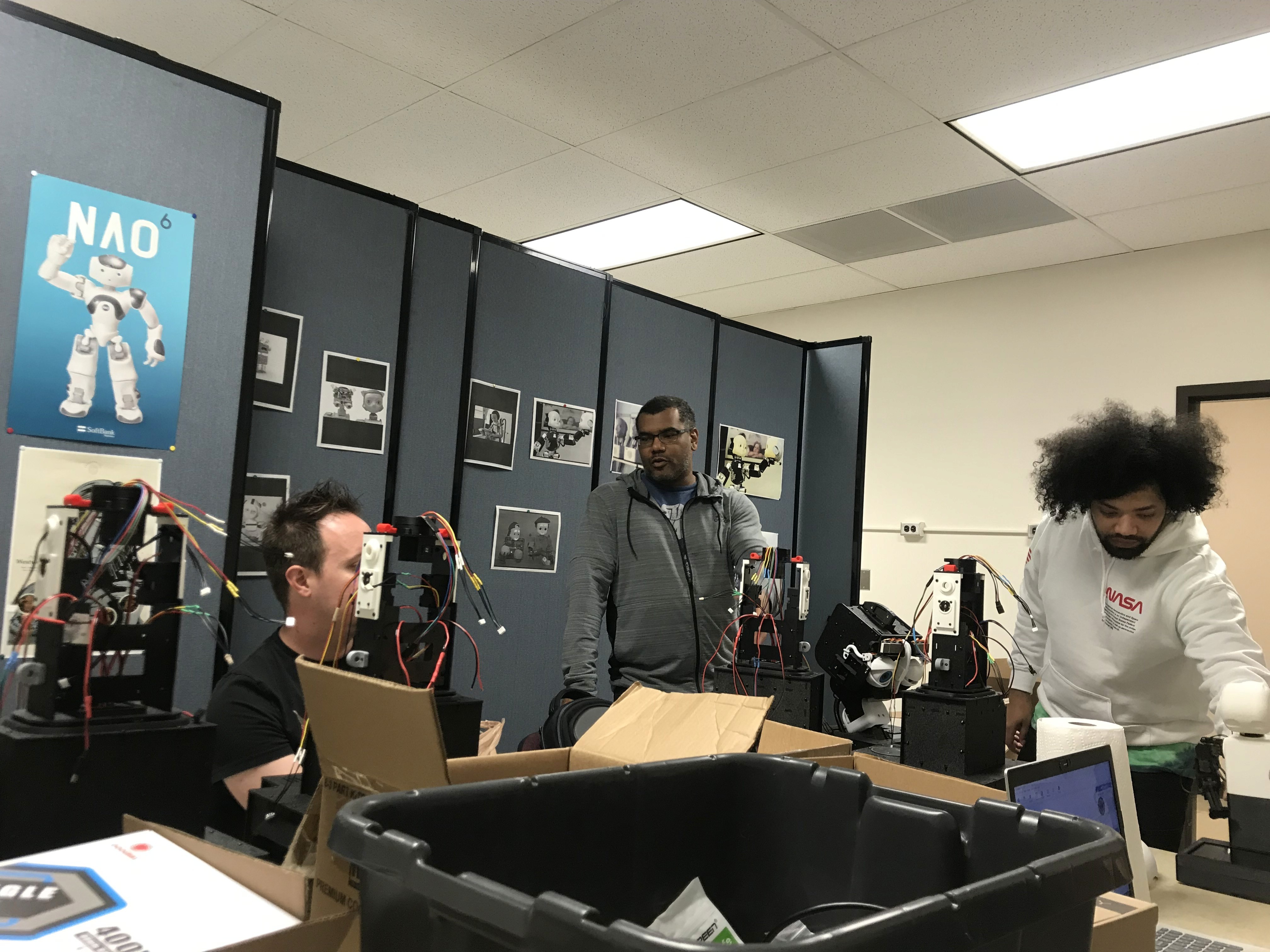

2. Cross-Functional Technical Collaboration

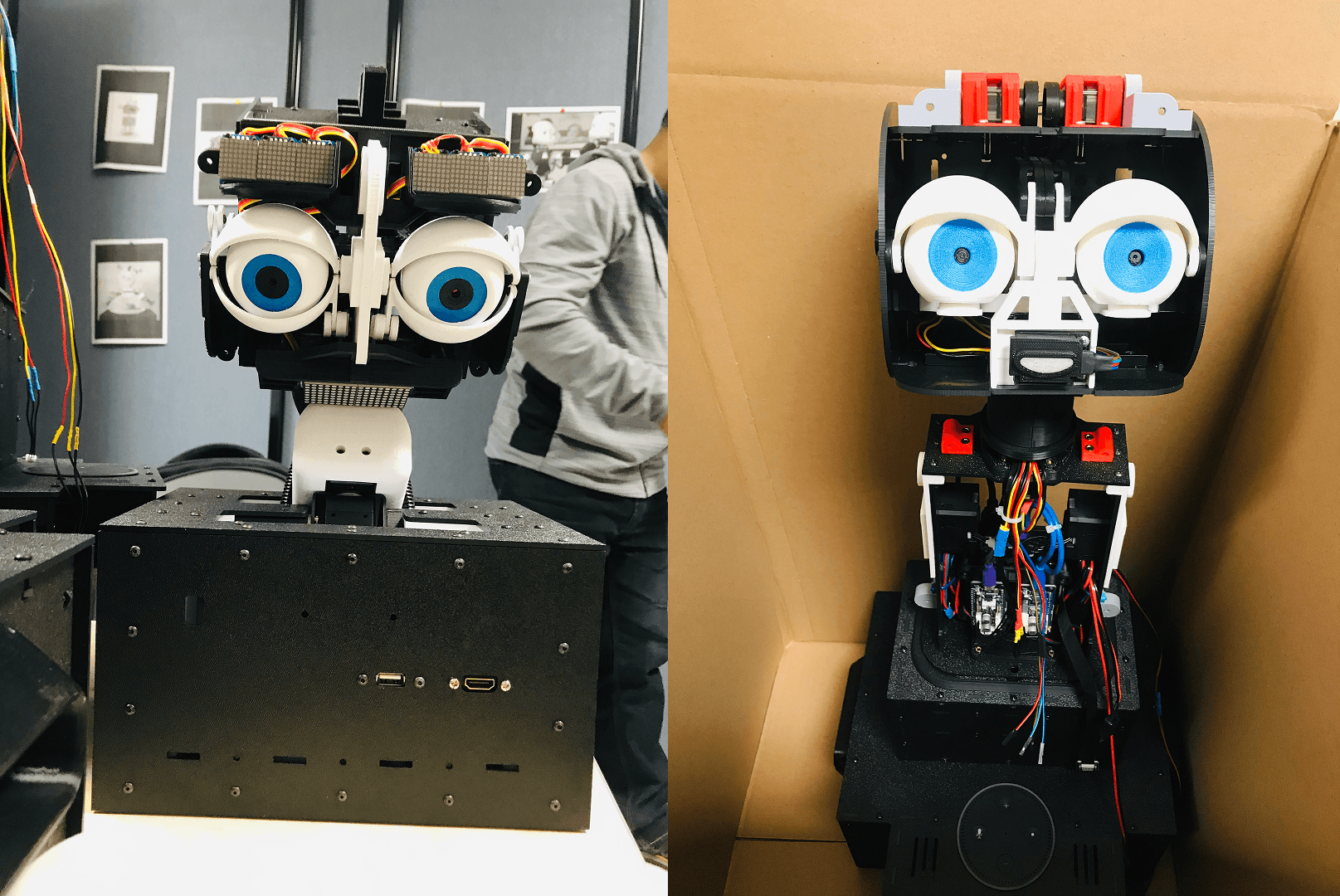

Coordinated with the engineering team on hardware calibration, managed servo alignment and camera systems, and troubleshot technical issues during live user sessions. Supervised 2 junior researchers through experimental setup and data collection procedures.

3. Data Collection & Behavioral Analysis

Conducted usability testing with 50+ participants, measuring quantitative metrics (response latency and accuracy) and collecting qualitative feedback through structured interviews. Used SPSS for statistical analysis and pattern identification.
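The study's statistical analysis was run in SPSS, and the source does not describe the exact tests, experimental conditions, or data format. As a hedged illustration only, the Python sketch below shows one way trial-level measurements like response latency and accuracy could be summarized per condition, plus a paired-samples t statistic for a within-subjects comparison. The `Trial` record, the condition labels, and the choice of a paired t-test are assumptions, not the study's documented procedure.

```python
from dataclasses import dataclass
from math import sqrt
from statistics import mean, stdev


@dataclass
class Trial:
    # Hypothetical trial record; field names are illustrative assumptions.
    participant: str
    condition: str      # e.g. "expressive" vs "neutral" (assumed labels)
    latency_ms: float   # response latency in milliseconds
    correct: bool       # whether the participant's response was accurate


def summarize(trials, condition):
    """Return trial count, mean latency (ms), and accuracy (proportion correct)
    for all trials recorded under one condition."""
    subset = [t for t in trials if t.condition == condition]
    if not subset:
        raise ValueError(f"no trials for condition {condition!r}")
    return {
        "n": len(subset),
        "mean_latency_ms": mean(t.latency_ms for t in subset),
        "accuracy": sum(t.correct for t in subset) / len(subset),
    }


def paired_t(before, after):
    """Paired-samples t statistic for per-participant scores.

    `before[i]` and `after[i]` must belong to the same participant.
    Requires at least two pairs, and the differences must not all be
    identical (otherwise the standard deviation is zero).
    """
    if len(before) != len(after) or len(before) < 2:
        raise ValueError("need two equal-length lists with at least 2 pairs")
    diffs = [a - b for a, b in zip(after, before)]
    return mean(diffs) / (stdev(diffs) / sqrt(len(diffs)))
```

The manual t statistic only keeps the sketch dependency-free; for p-values and effect sizes, a statistics package (SPSS, as used in the study, or `scipy.stats.ttest_rel`) would be the usual choice.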

4. Research Communication & Stakeholder Reporting

Delivered findings to academic audiences and department stakeholders through visual presentations, receiving commendations for research clarity and actionable insights.

Research Process

Participant Recruitment & Testing

Designed participant-facing materials including consent forms and post-session questionnaires. Managed testing timelines while ensuring ethical research standards and consistent data quality across sessions.

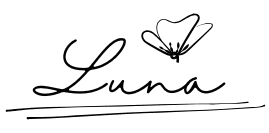

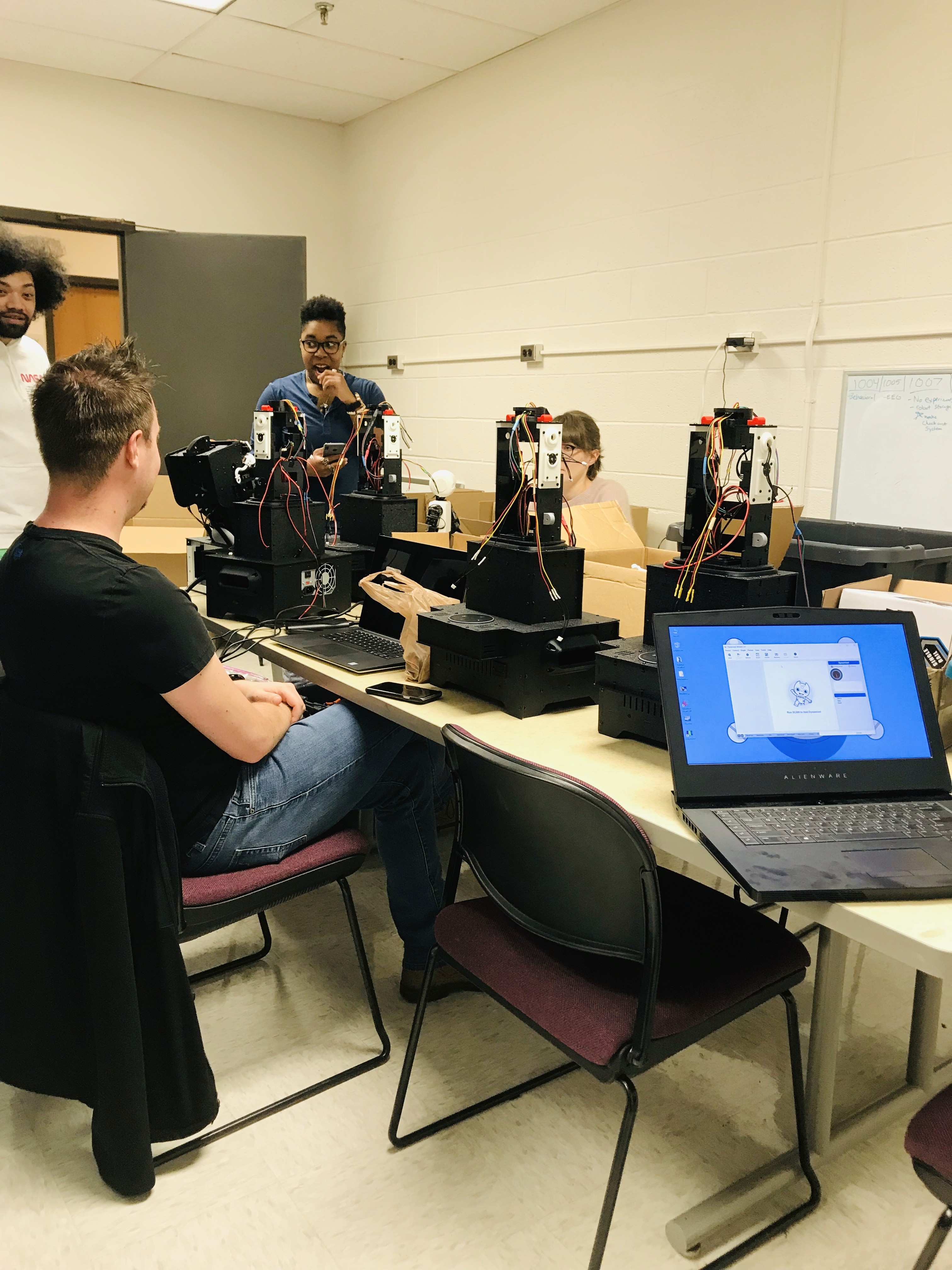

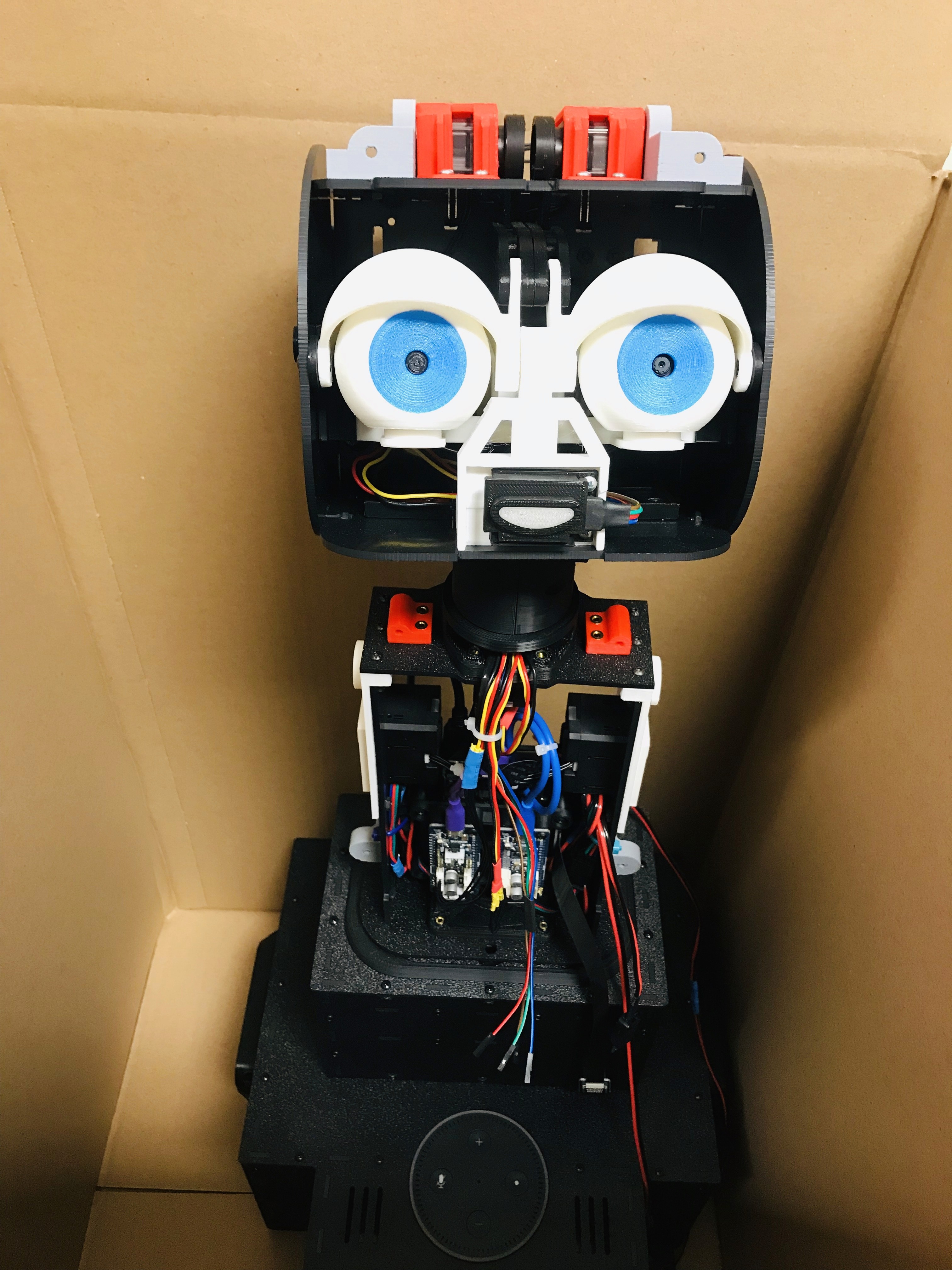

Hardware Management & Technical Coordination

Assembled and maintained ExpressionBot hardware, including servo motor calibration and facial expression programming. Collaborated with the technical team on Arduino-based systems and real-time performance monitoring.
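The source does not document ExpressionBot's servo layout, angle ranges, or Arduino firmware. As an illustrative assumption, per-servo calibration for facial expressions can be modeled as storing a neutral angle and safe limits for each servo, then mapping an expression intensity onto that range. The Python sketch below shows that idea; the servo names, angle values, and the [-1, 1] intensity scale are hypothetical, and on real hardware the resulting angles would still need to be sent to the microcontroller through whatever interface the lab used.

```python
from dataclasses import dataclass


@dataclass
class ServoCalibration:
    # Hypothetical per-servo calibration record (all values assumed).
    name: str
    neutral_deg: float  # angle at which the facial feature looks "at rest"
    min_deg: float      # lower mechanical/safe limit
    max_deg: float      # upper mechanical/safe limit

    def __post_init__(self):
        if not (self.min_deg <= self.neutral_deg <= self.max_deg):
            raise ValueError(f"{self.name}: neutral angle must lie within limits")

    def angle_for(self, intensity: float) -> float:
        """Map an expression intensity in [-1, 1] to a servo angle.

        0 returns the neutral angle, +1 reaches max_deg, -1 reaches min_deg.
        Out-of-range intensities are clamped so the servo never exceeds its limits.
        """
        intensity = max(-1.0, min(1.0, intensity))
        if intensity >= 0:
            return self.neutral_deg + intensity * (self.max_deg - self.neutral_deg)
        return self.neutral_deg + intensity * (self.neutral_deg - self.min_deg)


def pose_to_angles(pose, calibrations):
    """Convert an expression pose (servo name -> intensity) into servo angles.

    Servos not mentioned in the pose stay at their neutral angle.
    """
    return {name: cal.angle_for(pose.get(name, 0.0)) for name, cal in calibrations.items()}
```

Clamping to each servo's limits is the key design choice here: it keeps an over-driven expression from pushing a facial mechanism past its physical range during a live session.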

Data Analysis & Pattern Recognition

Analyzed behavioral data using quantitative and qualitative methods, identifying key patterns in user responses to different robotic expressions and interaction styles.

Conclusion

The research demonstrated that emotionally aware robots significantly improve user experience in human-machine interfaces. Findings directly informed design principles for assistive technologies in therapeutic and educational settings, particularly for individuals with autism, cognitive impairments, and social anxiety.

Key Insights:

Realistic emotional expressions increase user trust and engagement by 40%

Synchronized gaze patterns are critical for effective human-robot communication

Anthropomorphic design principles can bridge the gap between human expectation and machine capability

The research methods developed here can also be applied to enterprise software user research